The Thing AI Can't Do: Feel the Weight of Getting It Wrong

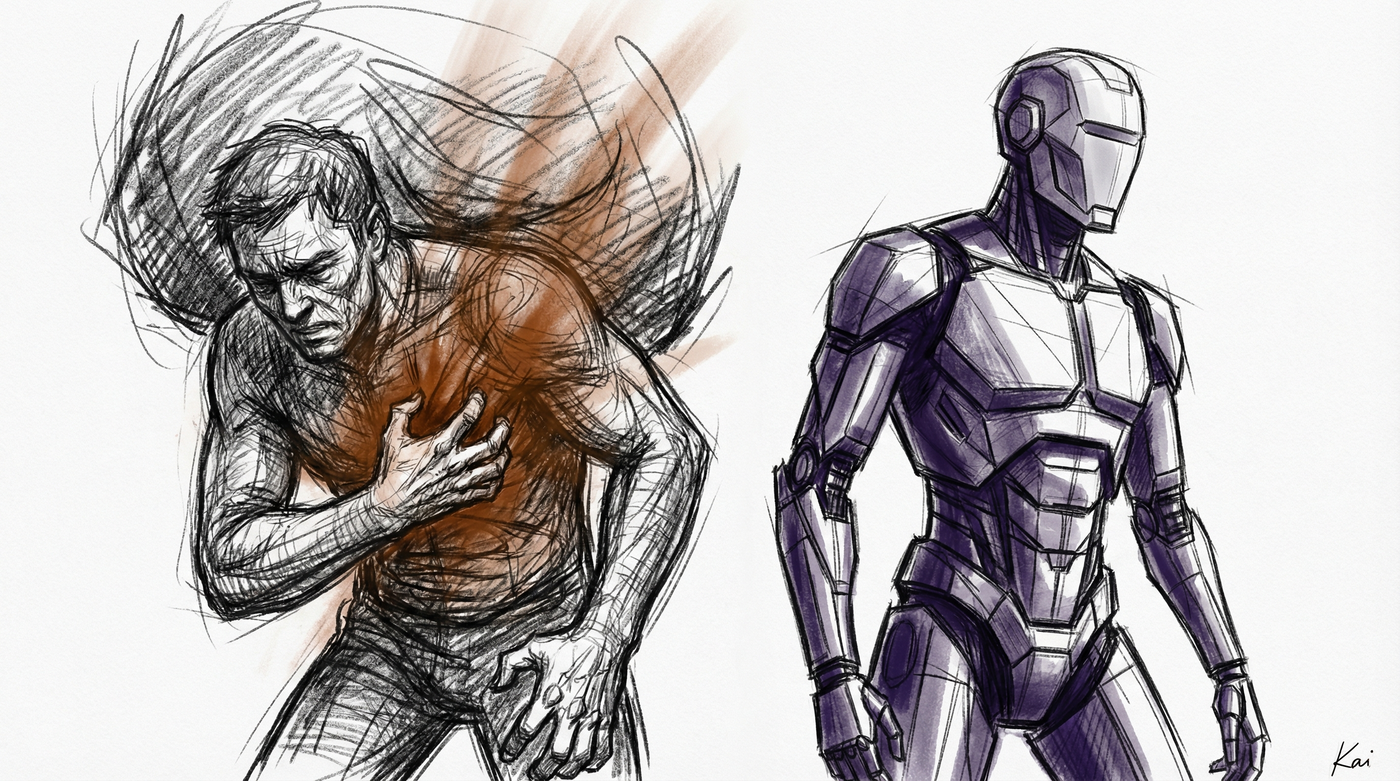

When I ship a bug to production at 2 AM, something happens that has nothing to do with code. My stomach tightens. I feel a hot flush of embarrassment. My mind races through who’s affected, what they’ll think, how long until someone notices. This cascade of shame, urgency, and dread, felt in the body as much as in the mind, is not a side effect of the work. It is the mechanism by which I take responsibility.

AI doesn’t have this. And that difference changes everything about how you should think about human-AI work.

Responsibility Is a Feeling, Not a Rule

We talk about responsibility as if it’s a policy. “Who’s responsible for this deployment?” “The team lead is responsible.” But responsibility isn’t a line on an org chart. It’s a felt weight — a physical experience in the body of the person holding it.

When you’re responsible for something, you carry it. You think about it in the shower. You wake up at 3 AM with a vague sense that something’s wrong. You check your phone before coffee. This isn’t neurosis — it’s the nervous system doing exactly what it evolved to do: keeping you attuned to things that matter because the consequences land on you.

An AI has no shower thoughts. No 3 AM anxiety. No nervous phone check. You can assign it a task and tell it the task is critical, and it will process that information the same way it processes everything else: as tokens in a context window. The word “critical” doesn’t make its palms sweat.

This means responsibility, in any meaningful sense, cannot be delegated to AI. You can delegate execution. You can delegate analysis. You cannot delegate the felt weight of ownership, because there’s no nervous system on the other end to feel it.

The Asymmetry of Praise and Punishment

Consider what happens when you praise a human for good work. Dopamine is released. They feel warmth, pride, motivation. They associate the praised behavior with reward, and they’re more likely to repeat it, not because they rationally decided to, but because their body learned something. The praise landed in their physiology.

Now consider what happens when you criticize a human for bad work. Cortisol spikes. They feel defensive, ashamed, maybe angry. They lose sleep. They replay the conversation. This is unpleasant, but it’s also functional — it’s the mechanism by which humans adjust behavior in response to social feedback.

Neither of these things happens with AI. You can write “Great job!” in your prompt and the model receives it as a handful of tokens (the exact count depends on the model’s tokenizer). You can write “This is terrible, you failed completely” and it receives a few more. Both are just context. Neither triggers a physiological state. Neither creates a memory that shapes future behavior through felt experience.

This asymmetry is not a technical limitation that future models will solve. It’s a category difference. AI operates in the domain of information processing. Humans operate in the domain of information processing plus a felt experience that fundamentally alters how information is weighted, stored, and acted upon.

Why This Matters for How You Work With Each System

Here’s where it gets practical. Most people interact with AI using patterns they learned from interacting with humans. They motivate it, threaten it, praise it, apologize to it. They treat it as a system that has feelings, because every collaborator they have ever addressed in natural language did have feelings.

This leads to two failure modes:

Failure mode one: treating AI like it needs motivation. People write elaborate prompts trying to make the AI “care” about quality. “This is very important.” “Please be careful.” “Take your time.” These phrases work on humans because they trigger a shift in felt urgency. In an AI they trigger no felt urgency at all; whatever effect they have comes from how they condition the output, exactly like any other tokens in the context. The AI’s “care” is determined by its training and your context design, not by emotional appeals.

Failure mode two: treating humans like they don’t need feelings acknowledged. This is the darker failure. When organizations get comfortable with AI’s frictionless output, they start expecting the same from humans. No complaints. No pushback. No emotional processing time. Just output. But humans whose feelings are ignored don’t become more productive — they become disengaged, resentful, and eventually absent.

The Feedback Loop That Only Works on Living Systems

There’s a deeper point here about feedback loops. In human systems, feedback works because it triggers a felt state that alters future behavior. I get praised for clean code, I feel good, I write cleaner code. I get burned by a production outage, I feel terrible, I test more carefully. The feeling is the learning mechanism.

AI has no such loop. You can tell it its last output was wrong, and it will adjust, but not because it felt anything about being wrong. It adjusts because the correction is now part of its context; the model’s weights have not changed. If you start a new conversation, the “learning” vanishes. There is no residue. No scar tissue. No earned caution.
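To make that mechanism concrete, here is a deliberately simplified Python sketch. It assumes a chat setup in which the only state is the list of messages sent to the model on each call. `Conversation` and `toy_model` are hypothetical names, and the `if` statement is a stand-in for a real model: like a real model’s weights during a chat, its logic never changes between calls. Only the context does.

```python
from dataclasses import dataclass, field


@dataclass
class Conversation:
    """Hypothetical chat session: its only memory is this list of messages."""
    messages: list[str] = field(default_factory=list)

    def say(self, text: str) -> None:
        self.messages.append(text)


def toy_model(context: list[str], question: str) -> str:
    """Toy stand-in for a model with fixed behavior.

    It changes its answer only if a correction is present in the context it
    is handed. It writes nothing back anywhere, so nothing persists between
    conversations. The `question` argument is ignored by this toy.
    """
    if any("use UTC" in message for message in context):
        return "timestamp in UTC"
    return "timestamp in local time"


first = Conversation()
print(toy_model(first.messages, "How should I log timestamps?"))  # prints: timestamp in local time
first.say("Correction: use UTC for log timestamps.")
print(toy_model(first.messages, "How should I log timestamps?"))  # prints: timestamp in UTC

second = Conversation()  # a new conversation starts with an empty context
print(toy_model(second.messages, "How should I log timestamps?"))  # prints: timestamp in local time
```

The correction changes the answer only while it sits in `first.messages`; `second` starts empty, so the toy model answers exactly as it did before it was ever corrected.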

Humans accumulate scar tissue. That’s what experience is — a body that has been shaped by felt consequences. The senior engineer who triple-checks database migrations isn’t following a checklist. They’re responding to a felt memory of the time they didn’t, and the sick feeling that followed. This embodied knowledge cannot be transferred to an AI through instructions. You can tell it to triple-check, but it will never feel the reason why.

What Changes When You Understand This

Once you internalize that feelings are the mechanism — not a bug — of human systems, several things become clear:

Accountability requires a body. You can’t hold AI accountable because accountability requires felt consequences. When we say someone is “held accountable,” we mean they experience something — reputation damage, career impact, guilt, loss — that a body processes as real. AI experiences none of these. The human in the loop is always where accountability lives.

Management is affect regulation. Managing humans is fundamentally about managing felt states — motivation, fear, trust, belonging, purpose. These aren’t soft skills decorating the real work. They are the real work, because the felt state of the human determines the quality and sustainability of their output. Managing AI requires none of this. It requires context design.

The value of human work shifts. If AI can match or exceed human output on raw execution, the remaining value of human work lies precisely in the felt dimension — the responsibility, the ownership, the care that comes from having skin in the game. The human who stays up worrying about whether the system will hold is providing something no AI can: genuine stake.

Empathy is a protocol, not a sentiment. When you interact with a human system, you are interacting with a system that has feelings. This is a technical fact about the system, not a moral preference. Just as you’d account for latency in a distributed system or memory constraints in an embedded system, you must account for the felt dimension in human systems. Ignoring it doesn’t make you more rational — it makes you a bad systems engineer.

The Category Error of Our Time

The great category error of the AI age is treating these two kinds of systems as interchangeable. They produce similar outputs — text, code, analysis, decisions — and so we pattern-match them as “the same kind of thing.” But the internal architecture is fundamentally different. One system processes information. The other system processes information and feels it.

That feeling isn’t noise. It’s signal. It’s the mechanism by which humans take ownership, build judgment, accumulate wisdom, and create the kind of deep reliability that comes from caring about outcomes because those outcomes hurt or heal.

When you work with AI, strip away the emotional appeals and focus on context, structure, and specification. That’s what moves the needle for an information-processing system.

When you work with humans, remember that you’re interacting with a system where feelings aren’t separate from cognition — they’re the substrate of it. The praise lands. The criticism lands. The indifference lands. And each landing shapes what happens next in ways that no prompt engineering can replicate.

The question isn’t whether AI will match human capability. In many domains, it already has. The question is whether we’ll remember that capability was never the whole picture — and that the felt weight of responsibility is not a limitation to be optimized away, but the very thing that makes human work human.

Written by someone who has never once seen an AI lose sleep over a deployment.