Aggregation Is the Context Game: Why Scattered Information Kills AI Performance

In my previous post on context before prompt, I argued that context engineering matters more than prompt engineering. But that raises an obvious follow-up question: what makes context good?

The answer, I’ve found, is aggregation.

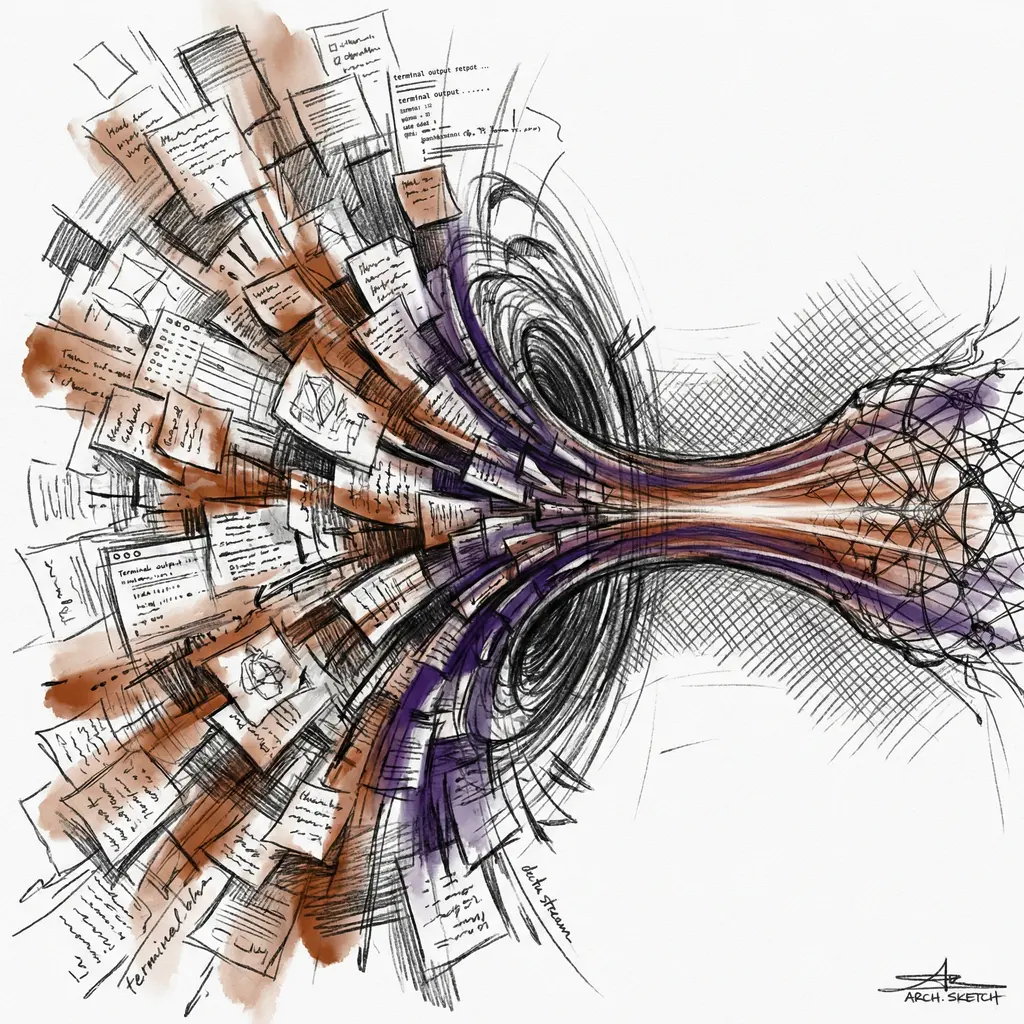

The Scatter Problem

Most people who work with AI have their information scattered across dozens of locations. Project specs in Confluence. Conversations in Slack. Code in Git. Decisions in email threads. Architecture diagrams on someone’s whiteboard. Meeting notes in Google Docs. Requirements in Jira tickets.

When you sit down with an LLM, what do you actually provide? A fraction of what matters. You type what you remember, which is always incomplete, biased toward recent events, and stripped of the nuance that lived in the original context.

The model gets a keyhole view of a panoramic landscape. And then you’re surprised when it misses something obvious.

Aggregation as a First-Class Engineering Problem

In infrastructure, we learned this lesson decades ago. You don’t debug distributed systems by reading individual log files on individual servers. You aggregate logs into a central system — ELK, Graylog, Loki — where you can search, correlate, and see patterns that are invisible at the individual level.

The same principle applies to LLM context. Scattered information produces scattered results. Aggregated information produces coherent, informed results.

But almost nobody treats aggregation as an engineering problem when working with AI. They treat it as copy-pasting. Open this doc, grab that section, paste it into the chat. That’s not aggregation — that’s manual labor with a high error rate.

What Good Aggregation Looks Like

Good context aggregation has specific properties:

Comprehensive — It captures all relevant information, not just what you remember right now. Your memory is lossy and biased. A well-aggregated context isn’t.

Structured — Raw information dumped into a context window is noise. Aggregated information needs organization: what’s the current state, what are the constraints, what decisions have been made and why, what’s been tried and failed.

Current — Stale context is worse than no context. If your aggregated information includes outdated decisions or resolved issues, the model will reason about a world that no longer exists.

Deduplicated — Redundant information wastes context window space. If the same constraint appears in three different documents, the aggregated version should mention it once.

Prioritized — Not everything is equally relevant. Good aggregation puts critical information first, supporting details later. Context windows have limits, and even within those limits, models attend more to some positions than others.
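Three of these properties (current, deduplicated, prioritized) are mechanical enough to sketch in code. What follows is a minimal illustration, not a reference implementation. The `ContextItem` type, the priority scale, the 90-day freshness window, and the character budget are all assumptions made for the example, and real deduplication would need something smarter than whitespace and case normalization.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class ContextItem:
    text: str       # the statement to show the model
    priority: int   # lower number = more important (hypothetical scale)
    updated: date   # when the statement was last confirmed accurate


def aggregate(items, today, max_age_days=90, budget_chars=2000):
    """Return context text that is current, deduplicated, and prioritized."""
    # Current: drop anything not confirmed within the freshness window.
    fresh = [i for i in items if (today - i.updated).days <= max_age_days]

    # Deduplicated: one copy per normalized statement, keeping the newest.
    newest = {}
    for item in fresh:
        key = " ".join(item.text.lower().split())
        if key not in newest or item.updated > newest[key].updated:
            newest[key] = item

    # Prioritized: critical items first, newest first within a priority.
    ordered = sorted(
        newest.values(),
        key=lambda i: (i.priority, -i.updated.toordinal()),
    )

    # Budget: stop before the character limit would be exceeded.
    lines, used = [], 0
    for item in ordered:
        cost = len(item.text) + 1  # +1 for the newline separator
        if used + cost > budget_chars:
            break
        lines.append(item.text)
        used += cost
    return "\n".join(lines)


# Hypothetical project notes, invented for this example.
items = [
    ContextItem("Deploys go through staging first.", 2, date(2024, 5, 1)),
    ContextItem("Billing writes must be ACID-compliant.", 1, date(2024, 5, 10)),
    ContextItem("billing writes must be  ACID-compliant.", 1, date(2024, 4, 2)),
    ContextItem("We are migrating off the old queue.", 3, date(2023, 1, 15)),
]
result = aggregate(items, today=date(2024, 6, 1))
print(result)
```

In this run the stale queue note is dropped, the duplicated billing constraint appears once, and the billing constraint comes ahead of the deploy rule because it has the higher priority.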

The Compound Effect of Bad Aggregation

Here’s what most people don’t realize: the cost of bad aggregation compounds with every interaction.

If your context is missing a critical constraint, the model produces a solution that violates it. You catch this, correct the model, and it adjusts. But now its context includes the wrong solution, your correction, and the adjusted solution — three things where one would have sufficed if the constraint had been present from the start.

Over a long conversation, these corrections accumulate. The context fills with patches and pivots instead of clean, forward-moving work. You end up spending context window budget on error recovery instead of actual progress.

I’ve seen conversations where 60% of the token budget went to correcting mistakes that would never have happened with properly aggregated context upfront.
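The arithmetic behind that compounding is easy to check on a toy conversation. The sketch below uses made-up token counts, chosen only to illustrate the calculation. They are not measurements, and `recovery_share` is a hypothetical helper.

```python
def recovery_share(turns):
    """Fraction of tokens spent on turns marked as error recovery.

    `turns` is a list of (token_count, is_recovery) pairs.
    """
    total = sum(tokens for tokens, _ in turns)
    recovery = sum(tokens for tokens, is_recovery in turns if is_recovery)
    return recovery / total if total else 0.0


# Hypothetical token counts, invented for illustration.
constraint_upfront = [
    (50, False),   # constraint stated in the initial context
    (800, False),  # solution that respects it
]
constraint_missing = [
    (800, True),   # solution that violates the unstated constraint
    (60, True),    # your correction
    (800, False),  # adjusted solution
]

for name, turns in [("upfront", constraint_upfront),
                    ("missing", constraint_missing)]:
    total = sum(tokens for tokens, _ in turns)
    print(f"{name}: {total} tokens, {recovery_share(turns):.0%} on recovery")
```

With these invented numbers, leaving out a 50-token constraint roughly doubles the tokens consumed, and about half of them go to recovery. That is for a single missed constraint in a single exchange.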

The Practical Toolkit

So how do you actually aggregate well? A few patterns I’ve found effective:

CLAUDE.md and Project Files

If you’re using Claude Code (or similar tools), project-level context files are your first aggregation layer. Everything the model needs to know about the project — architecture decisions, conventions, deployment processes, recent changes — lives in one place that gets loaded automatically.

This isn’t documentation for humans. This is aggregated context for the model. Different audience, different writing style. Be specific, be current, be direct.

Memory Systems

Persistent memory across conversations solves the temporal aggregation problem. Without it, every conversation starts from zero, and you re-explain the same context over and over. With it, the model accumulates understanding over time, like a colleague who’s been on the project for months.

The key insight: memory should store decisions and reasoning, not just facts. “We use PostgreSQL” is less useful than “We chose PostgreSQL over MongoDB because our access patterns are heavily relational and we need ACID transactions for the billing pipeline.”
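One way to make that habit stick is to give each memory entry a shape that has a slot for the reasoning. The sketch below is an assumption about how such a record might look, not the format of any particular memory system. The `Decision` class and the JSON round trip are illustrative only.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class Decision:
    choice: str
    rationale: str
    rejected: List[str] = field(default_factory=list)

    def to_context(self) -> str:
        """Render the decision as one line of model-facing context."""
        line = f"Decision: {self.choice}. Why: {self.rationale}."
        if self.rejected:
            line += f" Rejected alternatives: {', '.join(self.rejected)}."
        return line


def dump_memory(decisions: List[Decision]) -> str:
    """Serialize decisions so they survive between conversations."""
    return json.dumps([asdict(d) for d in decisions], indent=2)


def load_memory(payload: str) -> List[Decision]:
    """Rebuild Decision objects from a previously dumped payload."""
    return [Decision(**entry) for entry in json.loads(payload)]


db_choice = Decision(
    choice="PostgreSQL",
    rationale=("access patterns are heavily relational and the billing "
               "pipeline needs ACID transactions"),
    rejected=["MongoDB"],
)
restored = load_memory(dump_memory([db_choice]))
print(restored[0].to_context())
```

Because `rationale` is a required field, a bare fact like “We use PostgreSQL” cannot be recorded without someone also writing down why.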

Automated Context Assembly

The best aggregation is automated. Before the model even sees your prompt, systems can:

- Pull relevant code files based on the task
- Retrieve recent git history for affected modules
- Load relevant documentation
- Include test results and CI status
- Attach related conversation history
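The steps above can be sketched as a small assembler that runs a set of pluggable collectors and stitches their output around the prompt. This is a rough sketch under assumptions. `assemble_context`, the collector names, and the bracketed section format are hypothetical, and the demo uses stub collectors so it runs anywhere. The `git_history` helper shows what a real collector might look like. It shells out to `git log` and needs git and a repository.

```python
import subprocess
from typing import Callable, Dict

Collector = Callable[[str], str]  # takes the task, returns context text


def git_history(path: str, limit: int = 5) -> str:
    """Recent commits touching `path` (requires git and a repository)."""
    result = subprocess.run(
        ["git", "log", "--oneline", "-n", str(limit), "--", path],
        capture_output=True, text=True, check=True,
    )
    return result.stdout


def assemble_context(task: str, collectors: Dict[str, Collector]) -> str:
    """Run every collector and join the results into one context block."""
    sections = []
    for title, collect in collectors.items():
        try:
            body = collect(task).strip()
        except Exception as exc:
            # Make the gap visible instead of silently dropping the source.
            body = f"(unavailable: {exc})"
        if body:  # skip sources that returned nothing
            sections.append(f"[{title}]\n{body}")
    sections.append(f"[Task]\n{task}")
    return "\n\n".join(sections)


def broken_ci(task: str) -> str:
    raise RuntimeError("CI API timed out")


# Stub collectors with invented content. A real setup might use
# something like: lambda task: git_history("billing/")
collectors = {
    "Relevant code": lambda task: "def charge(invoice): ...",
    "Recent history": lambda task: "a1b2c3d Fix rounding in invoice totals",
    "CI status": broken_ci,
    "Docs": lambda task: "",
}
context = assemble_context("Add retry logic to charge()", collectors)
print(context)
```

A failed collector is reported inside the context rather than swallowed. That way both the human and the model can see that CI status is missing, instead of reasoning as if it had never existed.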

This is what separates toy AI usage from production AI workflows. The human types a short prompt. The system assembles a rich, comprehensive context around it. The model works with full situational awareness instead of guessing at the gaps.

Pre-Session Briefings

For complex work, I write a briefing before starting. Not a prompt — a context document. Current state of the system. What’s been tried. What the constraints are. What good looks like. Recent decisions and their rationale.

This takes ten minutes and saves hours of mid-conversation corrections. It’s the context engineering equivalent of reading the runbook before touching production.
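A briefing like this can be kept honest with a tiny template that refuses to render until every section has content. The sketch below is one possible shape. The section names come from the list above, while `render_briefing` and the sample notes are made up for illustration.

```python
BRIEFING_SECTIONS = (
    "Current state",
    "What has been tried",
    "Constraints",
    "What good looks like",
    "Recent decisions and rationale",
)


def render_briefing(notes):
    """Render a briefing, failing loudly if any section is empty."""
    missing = [name for name in BRIEFING_SECTIONS if not notes.get(name)]
    if missing:
        raise ValueError("Briefing is missing: " + ", ".join(missing))
    parts = []
    for name in BRIEFING_SECTIONS:
        bullets = "\n".join(f"- {entry}" for entry in notes[name])
        parts.append(f"{name}\n{bullets}")
    return "\n\n".join(parts)


# Hypothetical notes, invented for this example.
notes = {
    "Current state": ["Invoice export job fails on accounts with >10k rows"],
    "What has been tried": ["Raising the worker memory limit (no effect)"],
    "Constraints": ["No schema changes before the next release"],
    "What good looks like": ["Export completes in under five minutes"],
    "Recent decisions and rationale": [
        "Keep exports synchronous: customers expect an immediate download",
    ],
}
briefing = render_briefing(notes)
print(briefing)
```

The check is deliberately strict. An empty “Constraints” section usually means nobody has written them down yet, not that there are none.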

The Organizational Dimension

This isn’t just a personal productivity trick. At the organizational level, aggregation determines whether AI adoption actually works or just produces expensive noise.

Teams where knowledge is scattered across people’s heads can’t build good AI workflows. The AI can only work with what it’s given, and if nobody has aggregated the tribal knowledge, the AI operates without it.

The organizations getting the most from AI are the ones that already had good knowledge management practices. Wiki culture, decision logs, architecture decision records (ADRs), runbooks. They didn’t build these for AI — they built them because good engineering requires shared context. AI just amplified the payoff.

And the organizations struggling with AI? They’re the ones where critical information lives in the heads of three people who’ve been there since 2015. No amount of prompt engineering fixes that. You can’t aggregate what was never written down.

The Uncomfortable Implication

If aggregation is the game, then the real work of AI effectiveness is boring.

It’s writing things down. Keeping documentation current. Structuring information. Building systems that pull relevant context automatically. Maintaining knowledge bases. Recording decisions and rationale.

None of this is sexy. None of it fits in a LinkedIn post about “10x productivity with AI.” But it’s where the actual leverage lives.

The people getting extraordinary results from AI aren’t better prompters. They’re better aggregators. They’ve built systems — technical and habitual — that ensure the model always has what it needs to think clearly.

The Meta-Lesson

There’s a pattern here that extends beyond AI: the quality of any reasoning system’s output depends on the quality of the information it reasons over. This is true for human brains, for LLMs, for organizations, for scientific communities.

Garbage in, garbage out isn’t just a cliche about data pipelines. It’s a fundamental law about intelligence itself. And aggregation — the deliberate, structured collection and organization of relevant information — is how you fight entropy’s tendency to scatter, fragment, and degrade the information that intelligent systems depend on.

Context windows will keep getting larger. Models will keep getting smarter. But the fundamental constraint won’t change: a model can only reason about your specific situation from what’s in its context. And what’s in its context depends entirely on how well you’ve aggregated.

Build the aggregation layer. Everything else follows.